A decision tree algorithm uses a tree-like graph or model of decisions and their possible consequences, including chance event outcomes, resource costs, and utility (for example, a user satisfaction index). Technically, it is a way of displaying a "selection" algorithm, that is, one built only from conditional statements. It is widely used in operations research, particularly in decision analysis, to identify the strategy most likely to reach a goal. It is also a widely recognized method in machine learning.

Synopsis

A decision tree in machine learning has a flowchart-like structure in which each internal node represents a “test” on a specific attribute (e.g. whether a door is open or closed), each branch represents an outcome of that test, and each leaf node represents a class label (the decision reached after evaluating all attributes). The paths from root to leaf represent classification rules. In decision analysis, a decision tree is closely related to an influence diagram. Both are widely used as visual and analytical decision support tools in which the expected values (or expected utility, such as user satisfaction) of competing options are computed.
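The flowchart structure described above can be sketched in a few lines of Python. This is only an illustration: the `Node` class, the `classify` function, and the attributes `door_open` and `has_key` are hypothetical names built around the door example, not part of any library.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Node:
    """An internal node when `test` is set, a leaf node when `label` is set."""
    test: Optional[Callable[[dict], bool]] = None
    if_true: Optional["Node"] = None
    if_false: Optional["Node"] = None
    label: Optional[str] = None


def classify(node: Node, sample: dict) -> str:
    """Follow one root-to-leaf path and return the class label of the leaf."""
    while node.label is None:
        node = node.if_true if node.test(sample) else node.if_false
    return node.label


# Hypothetical toy tree: the first test is on the door, the second on a key.
tree = Node(
    test=lambda s: s["door_open"],
    if_true=Node(label="enter"),
    if_false=Node(
        test=lambda s: s["has_key"],
        if_true=Node(label="unlock"),
        if_false=Node(label="wait"),
    ),
)

print(classify(tree, {"door_open": False, "has_key": True}))  # prints "unlock"
```

Each call walks exactly one path from the root to a leaf, which is why a path can be read as a single classification rule.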

The components of a decision tree include, but are not limited to:

- Decision nodes: usually drawn as squares

- Chance nodes: usually drawn as circles

- End nodes: usually drawn as triangles
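To make the three node types concrete, here is a minimal sketch of how a decision-analysis tree is evaluated by folding expected values back from the end nodes. The dictionary layout, the functions `rollback` and `best_option`, and all payoffs and probabilities are invented for illustration.

```python
def rollback(node):
    """Return the expected value of a node, computed from its leaves upward."""
    kind = node["kind"]
    if kind == "end":
        return node["payoff"]
    if kind == "chance":
        # Probability-weighted average over the possible outcomes.
        return sum(p * rollback(child) for p, child in node["outcomes"])
    if kind == "decision":
        # The decision maker picks the option with the highest expected value.
        return max(rollback(child) for child in node["options"].values())
    raise ValueError(f"unknown node kind: {kind}")


def best_option(decision):
    """Name of the option with the highest expected value at a decision node."""
    options = decision["options"]
    return max(options, key=lambda name: rollback(options[name]))


# Hypothetical example: launch a product (uncertain payoff) or hold (fixed payoff).
launch = {"kind": "chance", "outcomes": [
    (0.6, {"kind": "end", "payoff": 100.0}),
    (0.4, {"kind": "end", "payoff": -50.0}),
]}
hold = {"kind": "end", "payoff": 30.0}
root = {"kind": "decision", "options": {"launch": launch, "hold": hold}}

print(best_option(root), rollback(root))
```

In this toy case the chance node is worth 0.6 × 100 + 0.4 × (−50) = 40, which beats the fixed payoff of 30, so the decision node selects the launch option.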

Decision trees are commonly used in operations research and management. When decisions have to be made online, in real time and under incomplete knowledge, a decision tree should be paralleled by a probability model. Decision trees can also serve as a descriptive means of calculating conditional probabilities. They are closely tied to influence diagrams, utility functions, and several other decision analysis tools and methods, and they are applied directly in business, health economics, public health research, and management.

In machine learning classification, tree models are commonly offered at three levels of flexibility:

- Fine tree

- Medium tree

- Coarse tree

These presets differ mainly in how many splits the tree is allowed to make. On a given dataset they often produce similar or identical results.
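The following sketch shows one way a split budget can separate the three tree types, and why they can still end up with the same result. The text does not say which tool was used. The budgets of 100, 20, and 4 splits are an assumption borrowed from the presets of the same names in MATLAB's Classification Learner, and the one-feature toy data and all function names are invented.

```python
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels (0.0 means the node is pure)."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(points):
    """Return (gain, threshold) for the best cut of one leaf, or None."""
    points = sorted(points, key=lambda p: p[0])
    n = len(points)
    parent = gini([y for _, y in points])
    best = None
    for i in range(1, n):
        if points[i - 1][0] == points[i][0]:
            continue  # cannot cut between equal feature values
        left = [y for _, y in points[:i]]
        right = [y for _, y in points[i:]]
        children = (len(left) * gini(left) + len(right) * gini(right)) / n
        gain = (parent - children) * n  # impurity decrease, weighted by leaf size
        if gain > 1e-12 and (best is None or gain > best[0]):
            best = (gain, (points[i - 1][0] + points[i][0]) / 2)
    return best


def grow(points, max_splits):
    """Split the most improvable leaf repeatedly until the budget runs out."""
    leaves = [points]
    for _ in range(max_splits):
        scored = [(best_split(leaf), i) for i, leaf in enumerate(leaves)]
        scored = [(s, i) for s, i in scored if s is not None]
        if not scored:
            break  # every leaf is already pure
        (_, threshold), i = max(scored, key=lambda item: item[0][0])
        leaf = leaves.pop(i)
        leaves.append([p for p in leaf if p[0] <= threshold])
        leaves.append([p for p in leaf if p[0] > threshold])
    return leaves


def training_accuracy(leaves):
    """Fraction of points matched by a majority vote inside each leaf."""
    total = sum(len(leaf) for leaf in leaves)
    hits = sum(Counter(y for _, y in leaf).most_common(1)[0][1] for leaf in leaves)
    return hits / total


# Invented toy data: one numeric feature, class changes every three points.
data = [(x, "a" if (x // 3) % 2 == 0 else "b") for x in range(12)]
PRESETS = {"fine": 100, "medium": 20, "coarse": 4}  # assumed split budgets
results = {name: training_accuracy(grow(data, k)) for name, k in PRESETS.items()}
print(results)
```

On this toy data three splits are enough to make every leaf pure, so all three budgets stop at the same tree. The presets only diverge when the data needs more splits than the smallest budget allows.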

Structuring the data for regression or classification models is a good strategy for training on a dataset with the best accuracy and feature selection. A decision tree algorithm in machine learning works through a set of iterative steps, choosing one split after another, before it reaches a final tree that can be plotted on screen.
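One common way to choose the feature for each iterative step is information gain, sketched below. This is a generic illustration, not necessarily the criterion used in this article. The column names come from the CDC dataset discussed here, but the four rows, their values, and the `label` column are invented.

```python
import math
from collections import Counter, defaultdict


def entropy(labels):
    """Shannon entropy, in bits, of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(rows, feature, target):
    """Entropy of the target minus its weighted entropy after grouping by feature."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[feature]].append(row[target])
    n = len(rows)
    remainder = sum(len(g) / n * entropy(g) for g in groups.values())
    return entropy([row[target] for row in rows]) - remainder


# Invented rows: GeographicLevel separates the labels, DataSource never varies.
rows = [
    {"GeographicLevel": "City", "DataSource": "BRFSS", "label": "high"},
    {"GeographicLevel": "City", "DataSource": "BRFSS", "label": "high"},
    {"GeographicLevel": "Census Tract", "DataSource": "BRFSS", "label": "low"},
    {"GeographicLevel": "Census Tract", "DataSource": "BRFSS", "label": "low"},
]

gains = {f: information_gain(rows, f, "label") for f in ("GeographicLevel", "DataSource")}
best_feature = max(gains, key=gains.get)
print(best_feature, gains)
```

A column that never changes carries no information about the label, so its gain is zero. This is one reason constant or near-constant columns are natural candidates for removal.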

For instance, consider a recently downloaded dataset from CDC.gov with the following columns (feature labels):

| Year, StateAbbr, StateDesc, CityName, GeographicLevel, DataSource, Category, UniqueID, Measure, Data_Value_Unit, DataValueTypeID, Data_Value_Type, Data_Value, Data_Value_Footnote_Symbol, Data_Value_Footnote, Low_Confidence_Limit, High_Confidence_Limit, PopulationCount, GeoLocation, CategoryID, MeasureId, CityFIPS, TractFIPS, Short_Question_Text |

Dataset source: Centers for Disease Control and Prevention. 2016. 500 Cities: Binge drinking among adults aged >=18 years | Chronic Disease and Health Promotion Data & Indicators. [ONLINE] Available at: https://chronicdata.cdc.gov/500-Cities/500-Cities-Binge-drinking-among-adults-aged-18-yea/gqat-rcqz/data. [Accessed 1 February, 2018].

The full dataset, opened in Excel, has over twenty-five thousand (25,000) records. I split it into 50 records for training and kept the rest for prediction analysis.
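A split like this can be sketched as follows. The shuffle, the fixed seed, and the function name `split_rows` are assumptions, since the text does not say how the 50 training records were chosen. The list of integers only stands in for the real records.

```python
import random


def split_rows(rows, n_train=50, seed=0):
    """Shuffle a copy of the rows, then return (training rows, held-out rows)."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    return shuffled[:n_train], shuffled[n_train:]


# Stand-in for the records: 25,000 row indices rather than real CDC data.
rows = list(range(25_000))
train, held_out = split_rows(rows)
print(len(train), len(held_out))  # prints "50 24950"
```

Shuffling before the cut avoids training only on whatever happens to sit at the top of the spreadsheet, for example a single state.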

Discriminant analysis and nearest neighbor classification cannot be applied to this raw data with its default predictors, because the features they need are not clearly visible.

Before training with a tree-based algorithm:

Image showing the default predictors of the raw CDC data

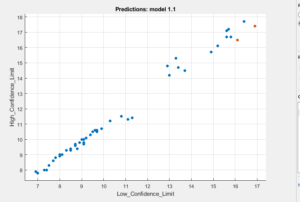

Image showing the relationship between Low_Confidence_Limit and High_Confidence_Limit

The columns Low_Confidence_Limit and High_Confidence_Limit seem to be unique features of this data drawn from the CDC.

Applying all of the above decision tree algorithms (fine, medium, and coarse tree) yields:

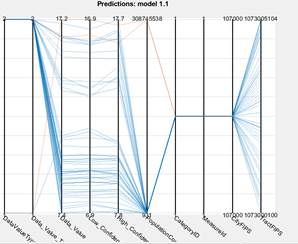

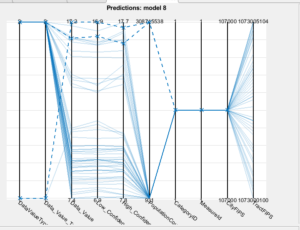

Observing the parallel coordinates plot under the decision tree algorithm, I noticed that something is going on around the following data columns:

| DataValueTypeID, Data_Value_Type, Data_Value, Data_Value_Footnote_Symbol, Data_Value_Footnote, Low_Confidence_Limit, High_Confidence_Limit, PopulationCount, GeoLocation, CategoryID, MeasureId, CityFIPS, TractFIPS |

So I decided to remove the other features and focus on these. In addition, I introduced principal component analysis (PCA) to reduce dimensionality.
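Dropping the other features amounts to keeping a fixed list of columns in every record. A minimal sketch, assuming the records are available as dictionaries keyed by column name. The function `select_columns` and the sample record are hypothetical, and the sample values are placeholders rather than CDC data.

```python
# Columns kept for the model, as listed in the text above.
KEEP = [
    "DataValueTypeID", "Data_Value_Type", "Data_Value",
    "Data_Value_Footnote_Symbol", "Data_Value_Footnote",
    "Low_Confidence_Limit", "High_Confidence_Limit", "PopulationCount",
    "GeoLocation", "CategoryID", "MeasureId", "CityFIPS", "TractFIPS",
]


def select_columns(rows, keep=KEEP):
    """Return copies of the rows that contain only the columns named in `keep`."""
    return [{name: row[name] for name in keep if name in row} for row in rows]


# Placeholder record: the values are invented, not taken from the CDC file.
sample = [{
    "Year": "2016", "StateAbbr": "XX", "Data_Value": "10.0",
    "Low_Confidence_Limit": "9.0", "High_Confidence_Limit": "11.0",
    "PopulationCount": "1000",
}]
reduced = select_columns(sample)
print(sorted(reduced[0]))
```

Columns such as Year and StateAbbr are silently dropped, while any kept column that is missing from a record is simply skipped.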

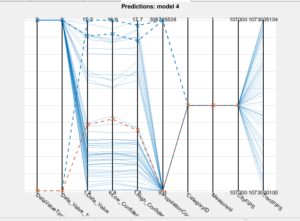
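As a rough illustration of how principal component analysis reduces dimensionality, the sketch below finds the first principal component of two correlated columns by power iteration and projects each row onto it. It is a bare-bones stand-in for a library PCA routine. The function name and the four (low, high) value pairs are invented.

```python
import math


def first_component(rows, iterations=200):
    """Return (unit direction, scores) for the first principal component."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix of the centered columns.
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    v = [1.0] * d
    for _ in range(iterations):
        # Power iteration: multiply by the covariance matrix and renormalise.
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    scores = [sum(c[j] * v[j] for j in range(d)) for c in centered]
    return v, scores


# Invented (low, high) pairs lying on a straight line, so one component suffices.
pairs = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
direction, scores = first_component(pairs)
print(direction, scores)
```

Two strongly related columns collapse into a single score per row, which is the sense in which PCA reduces the number of inputs the tree has to consider.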

Furthermore, reducing the noise in this dataset by using only the following data columns:

| Data_Value, Low_Confidence_Limit, High_Confidence_Limit, PopulationCount, GeoLocation, CategoryID, MeasureId, CityFIPS, TractFIPS |

yields a better model:

The average accuracy of the decision tree algorithms on this dataset is 95.5%.

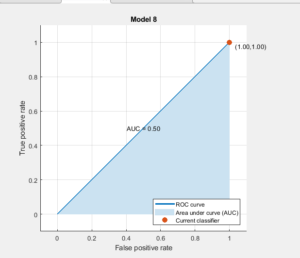

The final image above shows the decision tree algorithm applied to the CDC data with principal component analysis (PCA) enabled. In this instance, there is a true positive rate of 1.0 (100%), meaning the observations or outcomes concerned were all correctly classified.

With a computed accuracy of 95.9%, this reduced feature set captures the most important part of the data, which can then be mined and translated into useful patterns and situational awareness for decision and policy making.
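For reference, accuracy and true positive rate can be computed from actual and predicted labels as sketched below. The function names and the five example labels are invented. They only illustrate that a class can reach a true positive rate of 1.0 while overall accuracy stays below 100%.

```python
def accuracy(actual, predicted):
    """Fraction of observations whose predicted label equals the actual label."""
    correct = sum(a == p for a, p in zip(actual, predicted))
    return correct / len(actual)


def true_positive_rate(actual, predicted, positive):
    """TP / (TP + FN) for one class: how many of its members were found."""
    tp = sum(a == positive and p == positive for a, p in zip(actual, predicted))
    fn = sum(a == positive and p != positive for a, p in zip(actual, predicted))
    return tp / (tp + fn)


# Invented labels: every "a" is found, but one "b" is mistaken for an "a".
actual = ["a", "a", "b", "b", "b"]
predicted = ["a", "a", "b", "b", "a"]

print(accuracy(actual, predicted))                 # prints 0.8
print(true_positive_rate(actual, predicted, "a"))  # prints 1.0
```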

About the Author: Oluwatobi Owoeye has over seventeen years’ experience in Computer Systems and Technologies with several evidence-based results. He has worked on several Information Technology projects. He is a published author and currently specializes in Robotics & Mobile Computing: Analytics, Computer Vision, Distributed & Parallel Computing, and Machine Learning.
